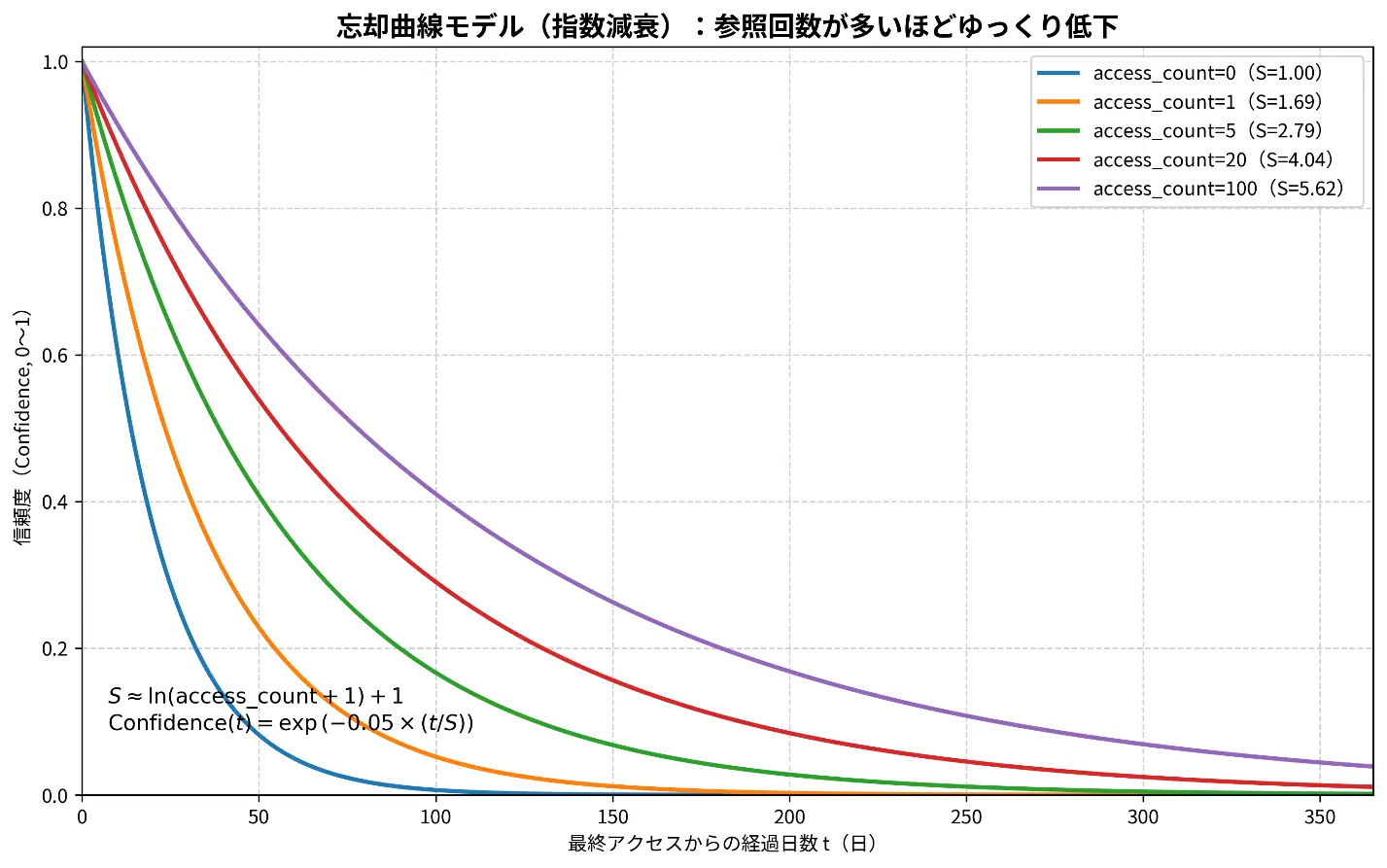

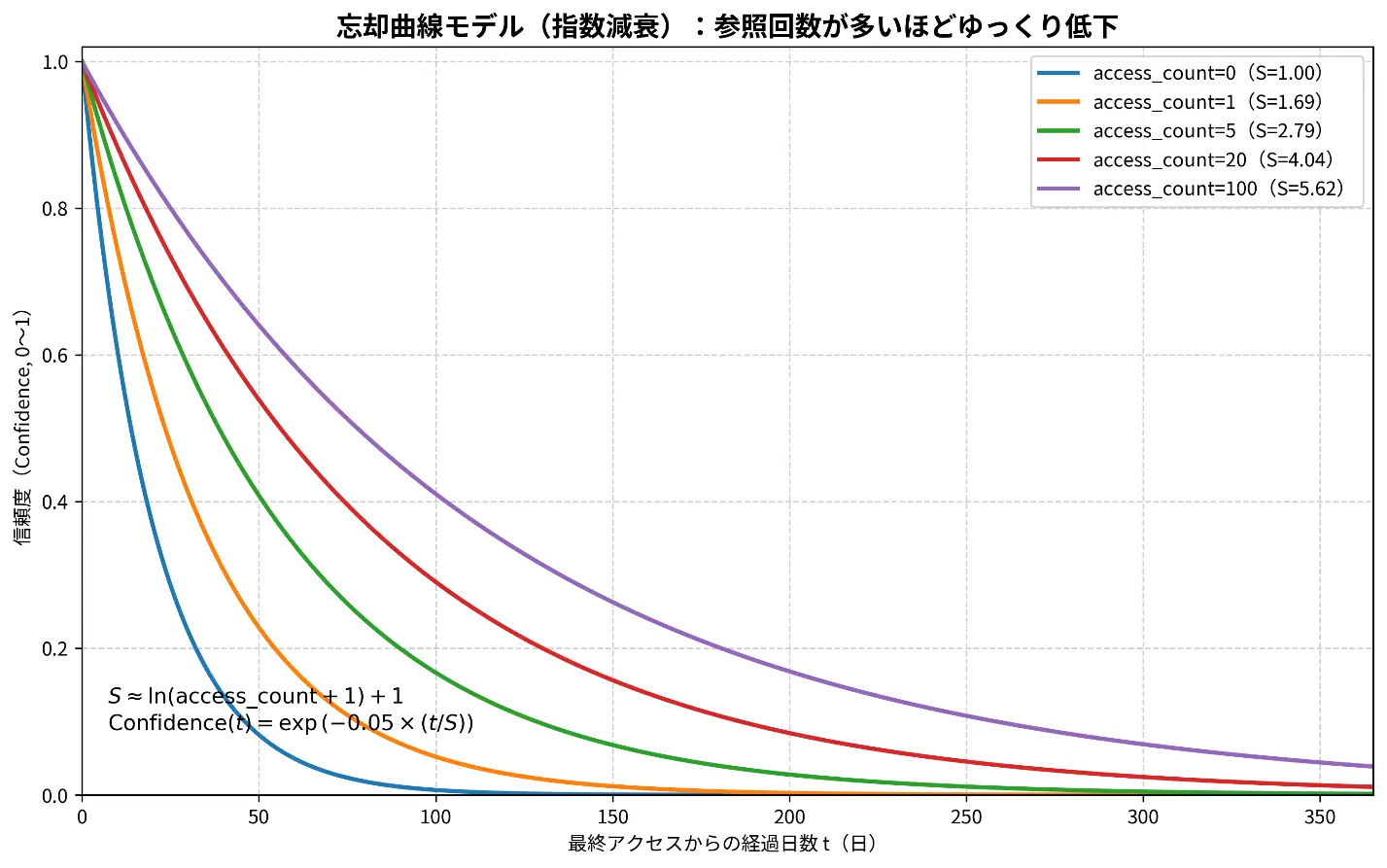



Research / Graduation Thesis

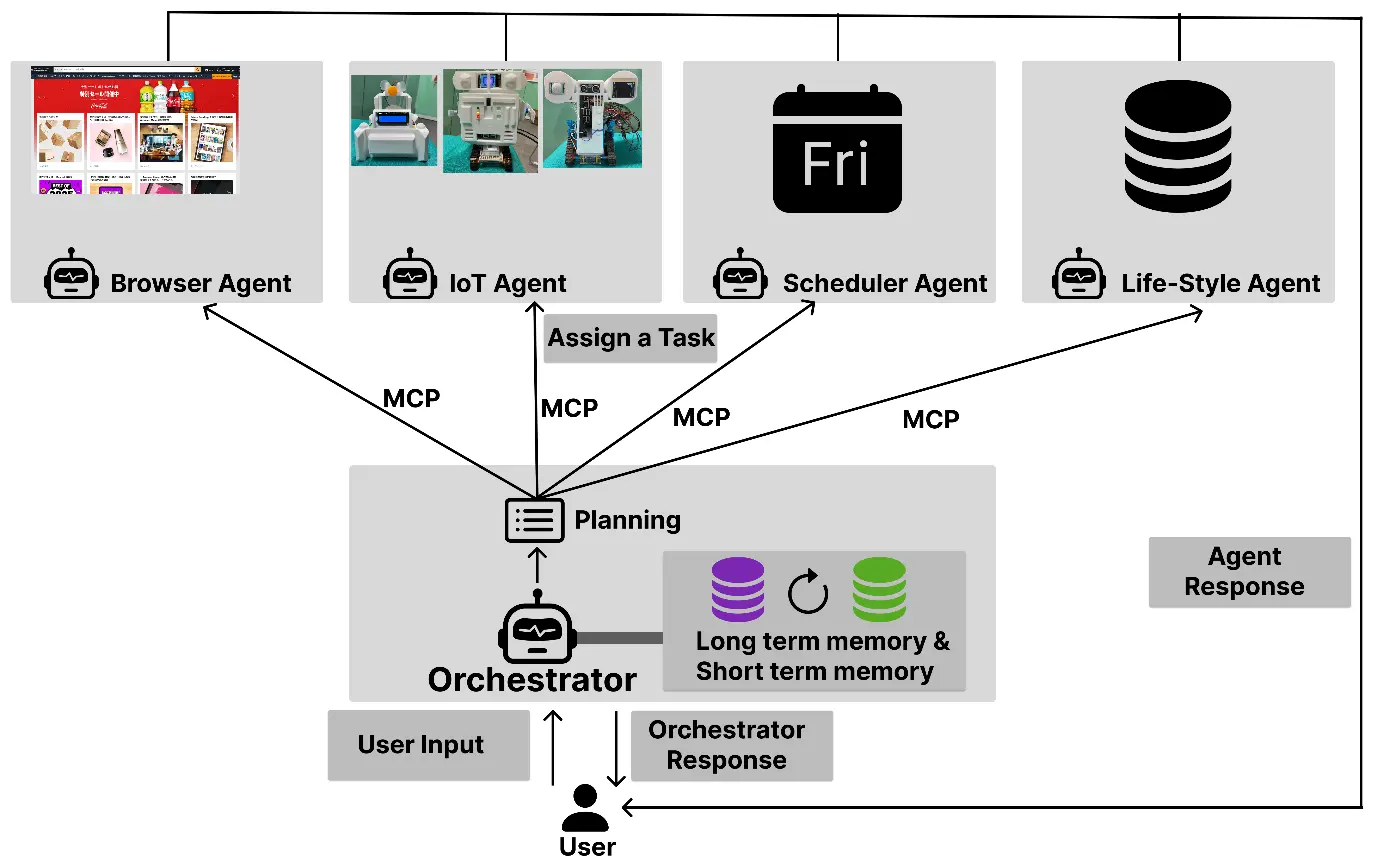

I designed, implemented, and evaluated an autonomous system that converts ambiguous natural language instructions into executable tasks across web operations, IoT control, knowledge retrieval, and scheduling.

Slides (English): NCSP-Presentation-EN.pptx

Built a system that can execute ambiguous requests like "do it as usual" across both web and IoT tasks.

Integrated a memory-enabled orchestrator with four specialist agents (Browser / IoT / Life-Style / Scheduler).

Designed a robust Plan → Execute → Review loop with automatic retries for failed tasks.

Standardized capabilities via MCP and implemented hierarchical inference using both cloud and edge LLMs.
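The Plan → Execute → Review loop with automatic retries can be illustrated with a minimal sketch. This is not the project's actual code: `run_plan`, `StepResult`, the `execute` and `review` callables, and the `max_retries` default are all hypothetical names chosen for illustration. It only shows the control flow: each planned step is executed, its output is reviewed, and a failed step is retried a bounded number of times.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class StepResult:
    step: str
    ok: bool
    attempts: int
    output: str = ""


def run_plan(
    steps: List[str],
    execute: Callable[[str], str],
    review: Callable[[str, str], bool],
    max_retries: int = 2,  # assumed default, not taken from the project
) -> List[StepResult]:
    """Execute each planned step, review its output, and retry on failure."""
    results: List[StepResult] = []
    for step in steps:
        attempts = 0
        ok = False
        output = ""
        # One initial attempt plus up to max_retries retries.
        while attempts <= max_retries and not ok:
            attempts += 1
            try:
                output = execute(step)
                ok = review(step, output)
            except Exception as exc:  # treat an execution error as a failed attempt
                output = f"error: {exc}"
                ok = False
        results.append(StepResult(step, ok, attempts, output))
        if not ok:
            break  # later steps are assumed to depend on this one
    return results
```

In this sketch, `execute` would stand in for a specialist agent call and `review` for whatever check the orchestrator applies to the result. Stopping at the first step that still fails after its retries is a design assumption, and a real system might replan instead.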

| Metric | Result | Impact |
|---|---|---|
| Integrated score (10 scenarios) | 15 (no memory) → avg 25.0 (with memory) | About 1.7x improvement (+67%) |
| Follow-up questions | Baseline total 2 → memory-enabled personas total 0 | Lower user burden |
| Edge inference speed | CPU 9.64 tok/s → GPU 24.76 tok/s | About 2.57x faster |
| Personalization example | Used address, allergy, and preference data to automate search/recommendation/scheduling | Demonstrated practical context awareness |
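The ratios in the Impact column follow directly from the Result column. The short check below reproduces them from the reported figures. The variable names are illustrative only.

```python
# Figures taken from the Result column of the table.
baseline_score = 15.0   # integrated score without memory
memory_score = 25.0     # average integrated score with memory
cpu_tps = 9.64          # edge inference on CPU, tokens per second
gpu_tps = 24.76         # edge inference on GPU, tokens per second

score_ratio = memory_score / baseline_score
score_gain_pct = (score_ratio - 1) * 100
speedup = gpu_tps / cpu_tps

print(f"score: {score_ratio:.2f}x (+{score_gain_pct:.0f}%)")  # score: 1.67x (+67%)
print(f"edge speedup: {speedup:.2f}x")                        # edge speedup: 2.57x
```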

LangGraph, MCP, Function Calling, RAG (LangChain + FAISS), dynamic model routing.

Python, Flask, REST API, SSE, SQLite, Docker, async job queue, Chrome CDP.
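As a rough illustration of dynamic model routing between edge and cloud LLMs, the sketch below picks a tier per request. The routing criteria (tool use, prompt length, edge availability), the `max_edge_words` threshold, and the names `Route` and `choose_model` are assumptions made for this example. The page does not state which signals the real router uses.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    tier: str    # "edge" or "cloud"
    reason: str


def choose_model(
    prompt: str,
    needs_tools: bool = False,
    edge_online: bool = True,
    max_edge_words: int = 40,  # hypothetical threshold
) -> Route:
    """Pick an inference tier using simple, assumed heuristics."""
    if not edge_online:
        return Route("cloud", "edge device unavailable")
    if needs_tools:
        return Route("cloud", "tool use requested")
    if len(prompt.split()) > max_edge_words:
        return Route("cloud", "prompt too long for edge model")
    return Route("edge", "short request without tools")
```

For example, `choose_model("turn on the light")` returns an edge route, while the same call with `needs_tools=True` falls through to the cloud tier.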

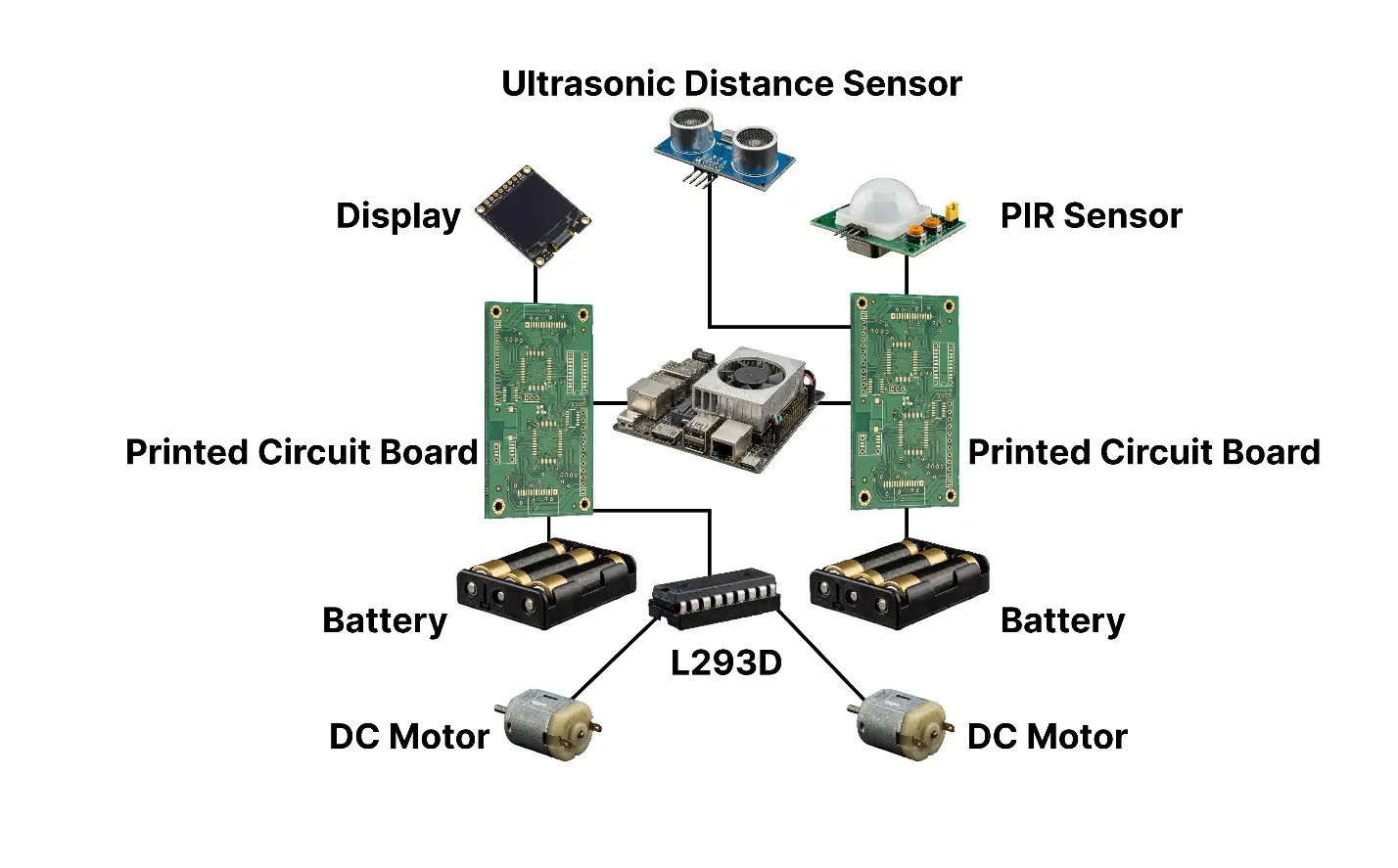

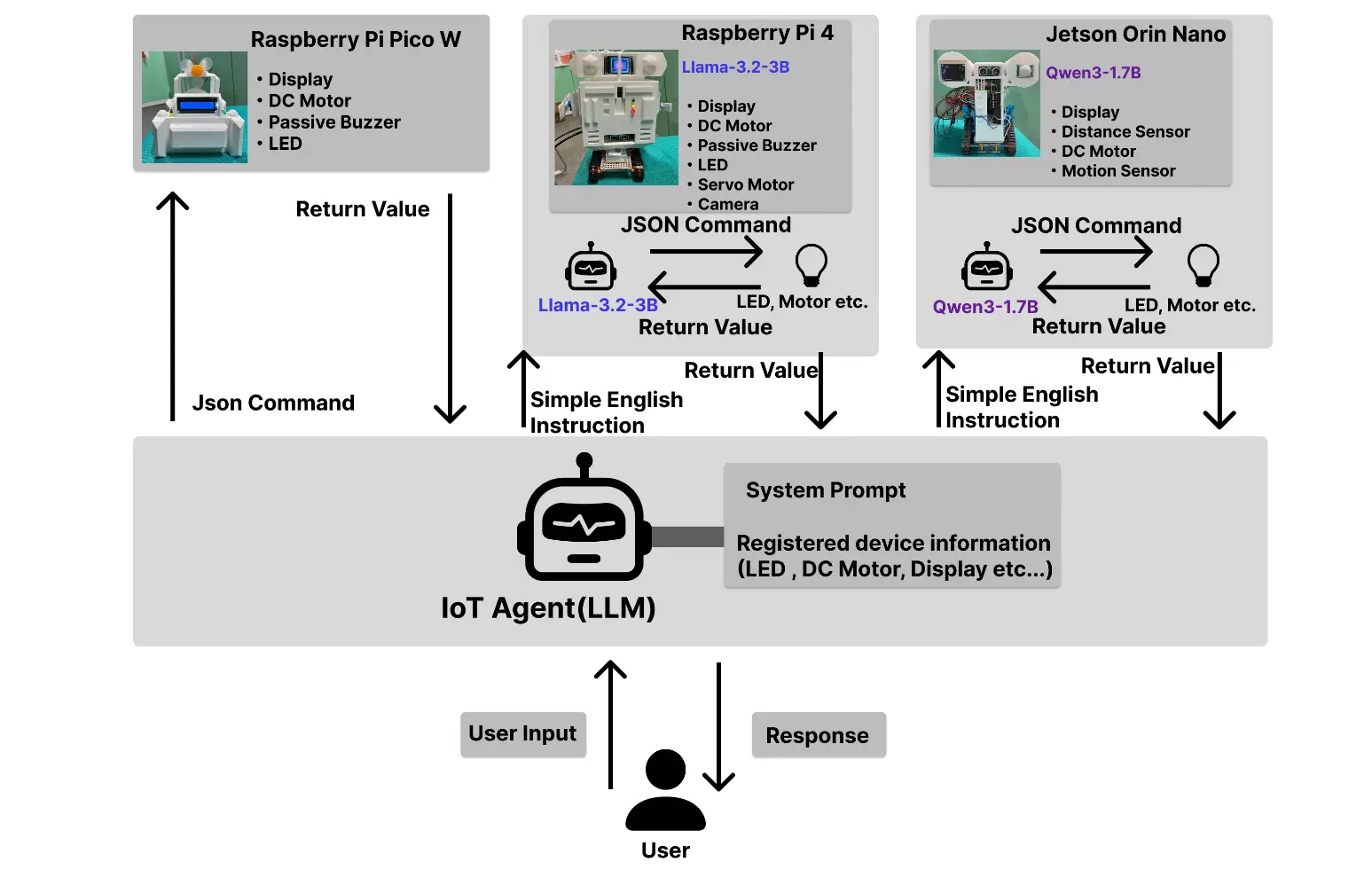

Integrated Jetson Orin Nano, Raspberry Pi 4, and Raspberry Pi Pico W with cloud-edge role separation.

Covered the full cycle end to end, from analyzing ambiguous requirements to API design, evaluation, and performance improvement.

Overall architecture: one orchestrator coordinates memory and four specialist agents to execute end-to-end tasks.

Memory system: separates long-term profile memory from short-term context memory for better personalization.

Jetson and Raspberry Pi devices connected through a unified control interface.
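The split between long-term profile memory and short-term context memory could look something like the sketch below. The `Memory` class, its method names, the five-turn window, and the prompt layout are all assumptions for illustration. The project's actual storage is not described here (the page mentions SQLite, but this example stays in memory).

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Deque, Dict


@dataclass
class Memory:
    # Long-term: stable facts about the user (e.g. address, allergy, preferences).
    profile: Dict[str, str] = field(default_factory=dict)
    # Short-term: only the most recent turns; older ones are dropped automatically.
    context: Deque[str] = field(default_factory=lambda: deque(maxlen=5))

    def remember_fact(self, key: str, value: str) -> None:
        self.profile[key] = value

    def add_turn(self, utterance: str) -> None:
        self.context.append(utterance)

    def build_prompt(self, request: str) -> str:
        """Combine both memory types into one prompt prefix."""
        facts = "; ".join(f"{k}={v}" for k, v in sorted(self.profile.items()))
        recent = " | ".join(self.context)
        return f"[profile] {facts}\n[recent] {recent}\n[request] {request}"
```

Keeping the two stores separate lets the short-term window roll over freely while profile facts persist, which is one plausible way a request like "do it as usual" could be resolved without a follow-up question.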

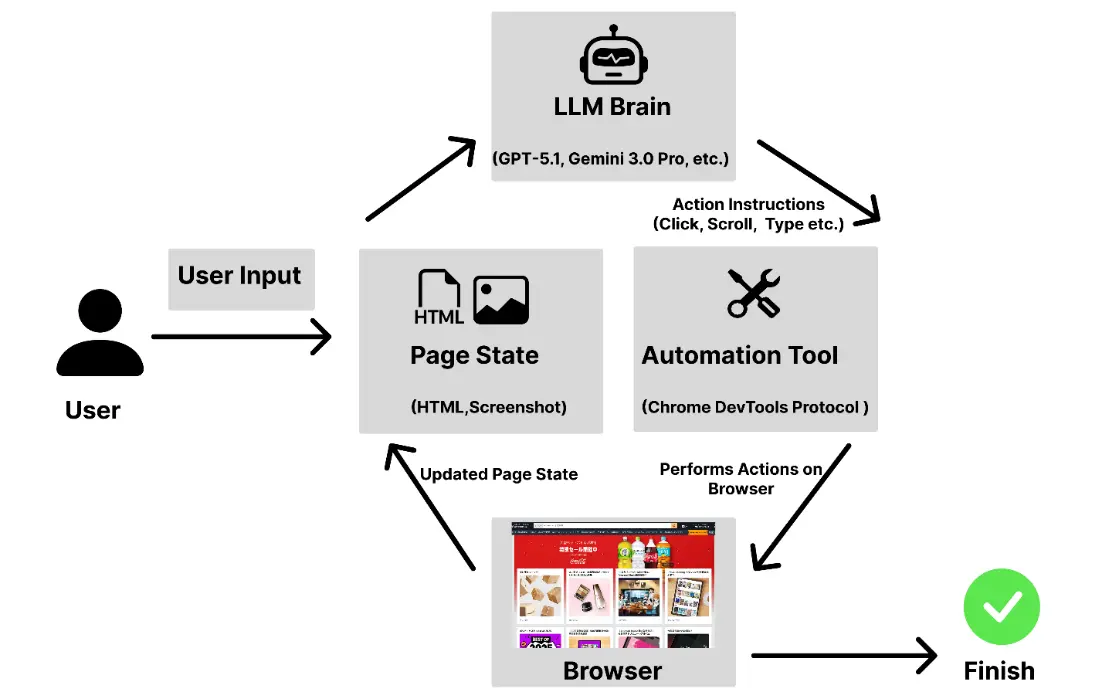

Handles web navigation, search, and multi-step UI operations.

Translates natural language into device-specific commands.
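To make the idea of translating natural language into device commands concrete, here is a deliberately simple stand-in. The real agent presumably relies on an LLM. This sketch swaps in a keyword table instead, and the device IDs, phrases, and the `DeviceCommand` type are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DeviceCommand:
    device: str
    action: str


# Hypothetical vocabulary; a real system would derive this from device metadata.
_DEVICES = {"light": "living_room_light", "fan": "desk_fan"}
_ACTIONS = {"turn on": "on", "switch on": "on", "turn off": "off", "switch off": "off"}


def parse_command(text: str) -> Optional[DeviceCommand]:
    """Map an utterance to a device command, or None if it is not understood."""
    lowered = text.lower()
    device = next((dev for word, dev in _DEVICES.items() if word in lowered), None)
    action = next((act for phrase, act in _ACTIONS.items() if phrase in lowered), None)
    if device is None or action is None:
        return None
    return DeviceCommand(device, action)
```

Returning `None` for unrecognized input gives the caller a clear signal to ask a follow-up question or hand the request to another agent.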

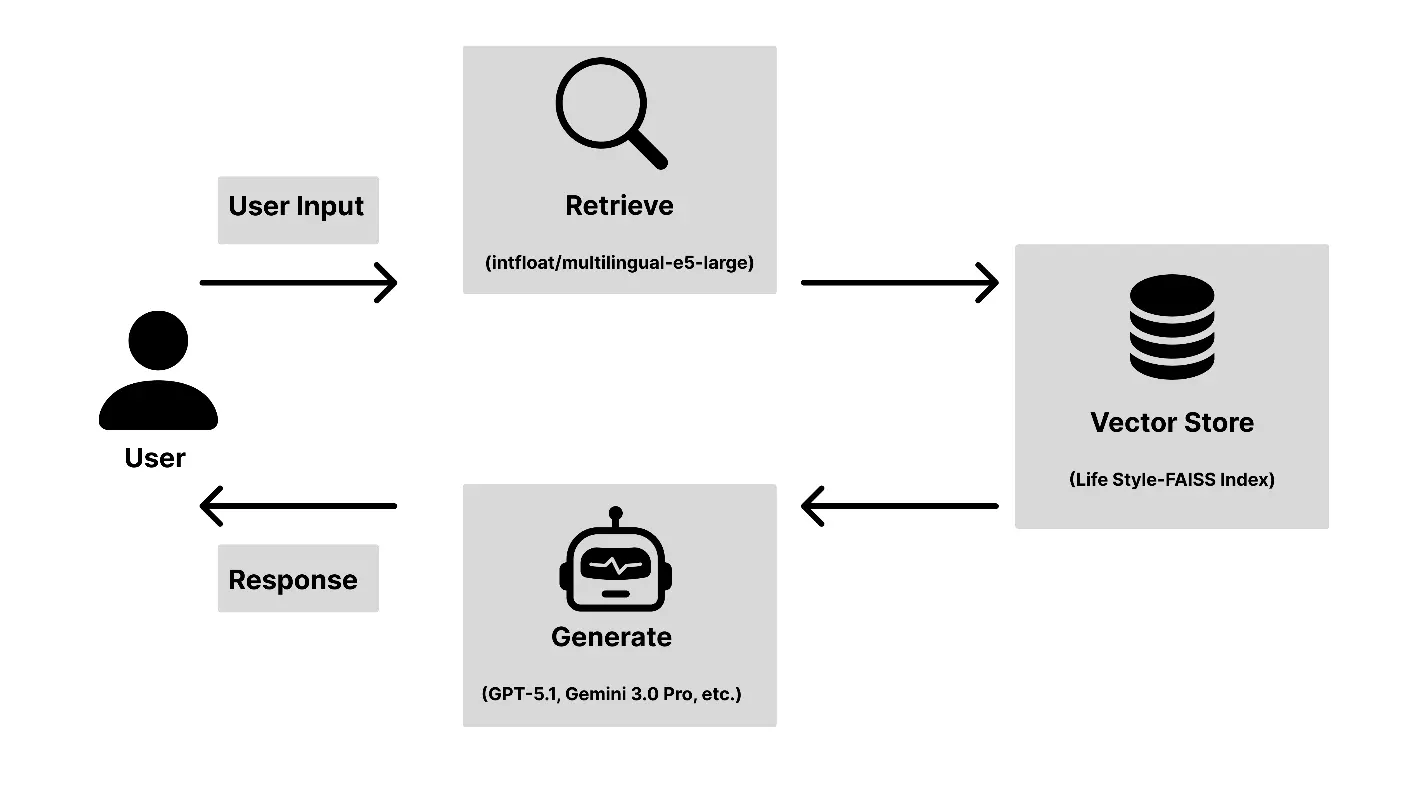

Uses RAG to provide grounded, context-aware daily support answers.
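The retrieval step behind a grounded answer can be sketched without any external libraries. The project lists LangChain and FAISS. The example below instead uses a plain bag-of-words cosine similarity as a stand-in, so the function names and the prompt format are assumptions, not the project's API.

```python
import math
from collections import Counter
from typing import List, Tuple


def _vec(text: str) -> Counter:
    """Bag-of-words term counts (stand-in for a learned embedding)."""
    return Counter(text.lower().split())


def _cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query: str, docs: List[str], k: int = 1) -> List[Tuple[float, str]]:
    """Return the k documents most similar to the query, best first."""
    q = _vec(query)
    scored = sorted(((_cosine(q, _vec(d)), d) for d in docs), reverse=True)
    return scored[:k]


def build_grounded_prompt(query: str, docs: List[str], k: int = 1) -> str:
    """Prepend retrieved passages so the model answers from them."""
    passages = "\n".join(f"- {doc}" for _, doc in retrieve(query, docs, k))
    return f"Answer using only these notes:\n{passages}\nQuestion: {query}"
```

Swapping `_vec` and `_cosine` for an embedding model and a vector index would keep the same retrieve-then-prompt shape.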

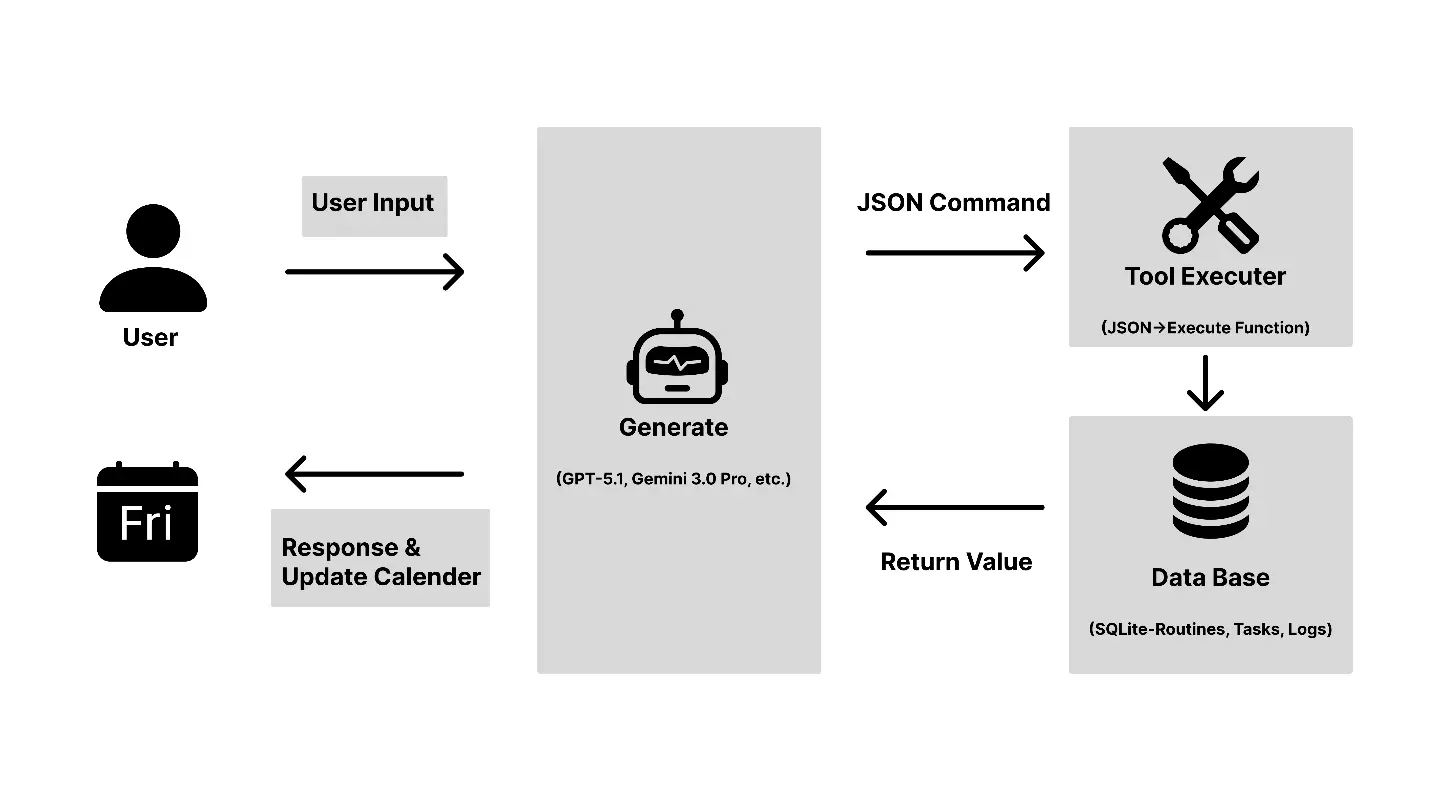

Manages tasks and routines through natural-language interactions.